*skip to step 3 if you just set up a template in the previous article.

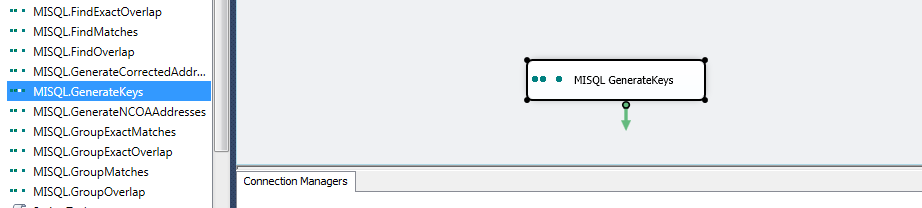

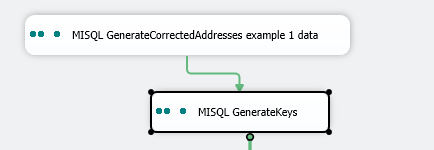

1. Launch SSDT, first create a new integration services project, then drop a key generation task onto the package

2. rename the task as a best practice, when there are multiple tasks with similar names, it may be harder to keep track later

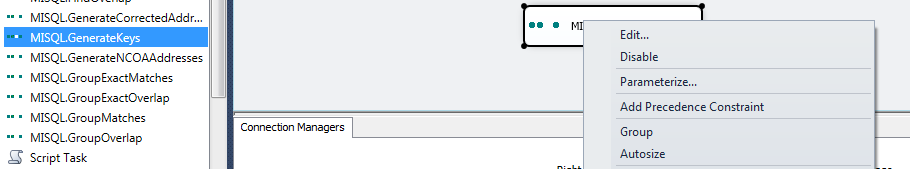

3. double click on the task, or right click and choose edit to open the task editor

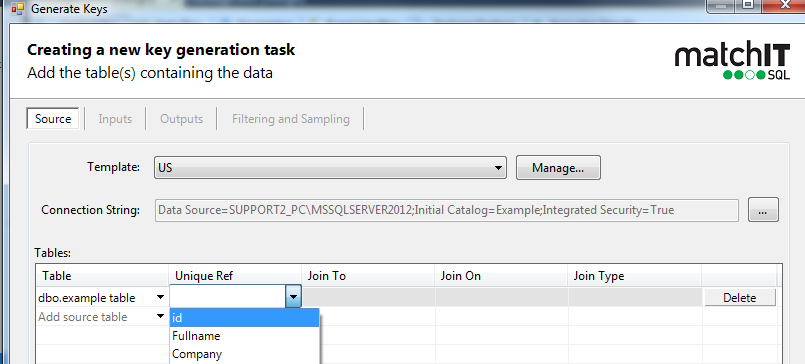

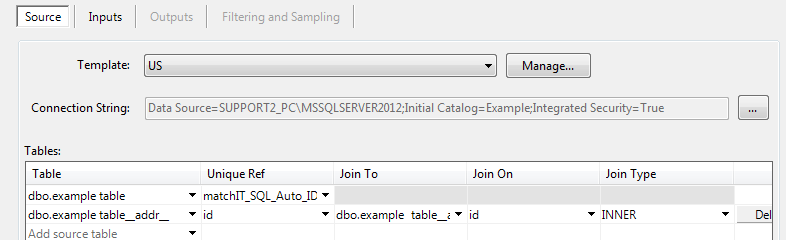

4. Choose your template, ideally your own one discussed in the previous article

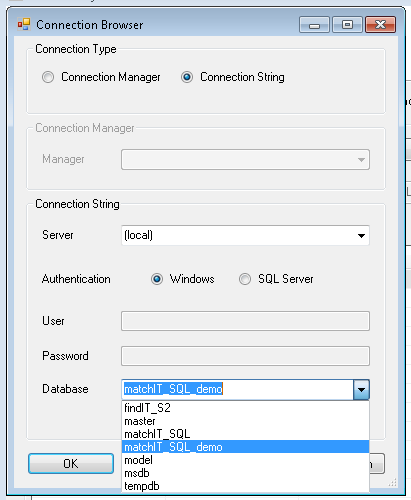

5. Choose ellipses to launch the connection browser

6. Select a server and database, click test connection, then click ok

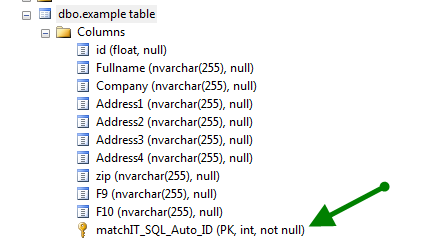

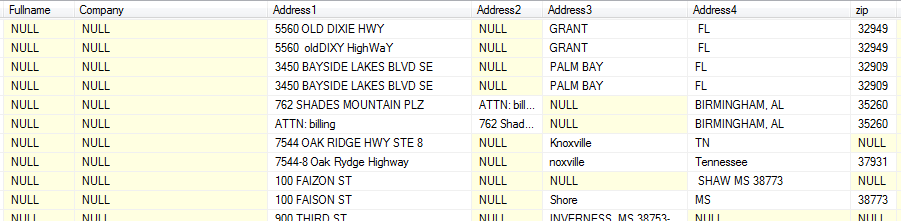

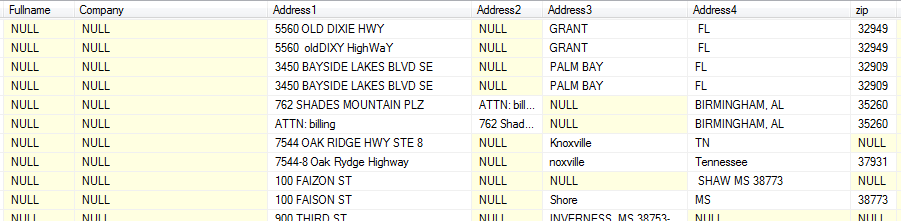

7. go to SSMS and look at the table, its best to take a look and understand your data

It’s also a best practice that all your tables you point mSQL to should have a non-nullable ID column with a primary key constraint against them.

8. go back to SSDT

Choose the table, and choose the ID, if you table doesn’t already have an ID (but ideally it should), you can otherwise choose AUTO, and it will create a column called matchIT_SQL_Auto_ID like in above screenshot

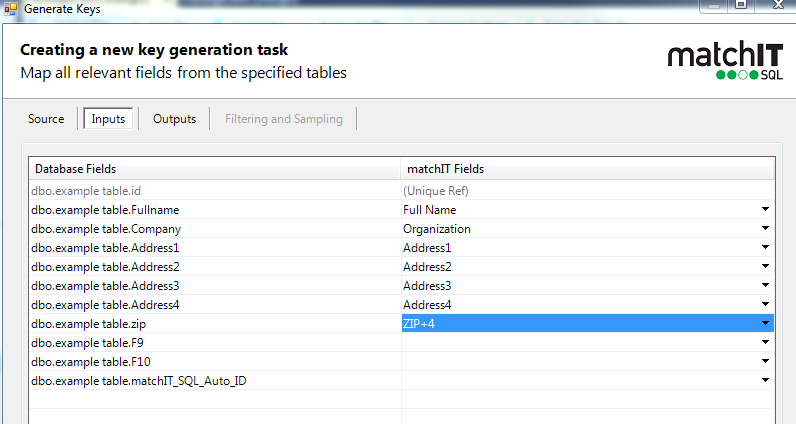

9.

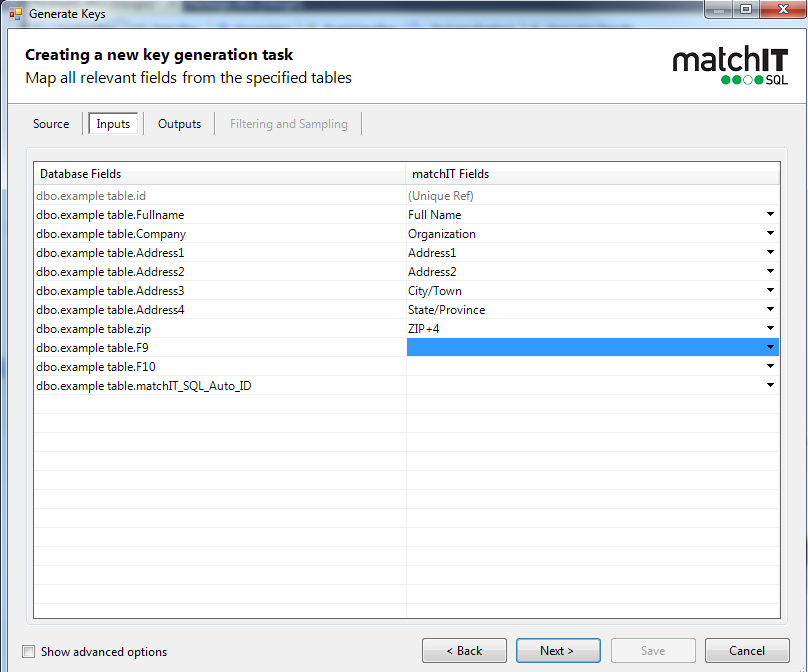

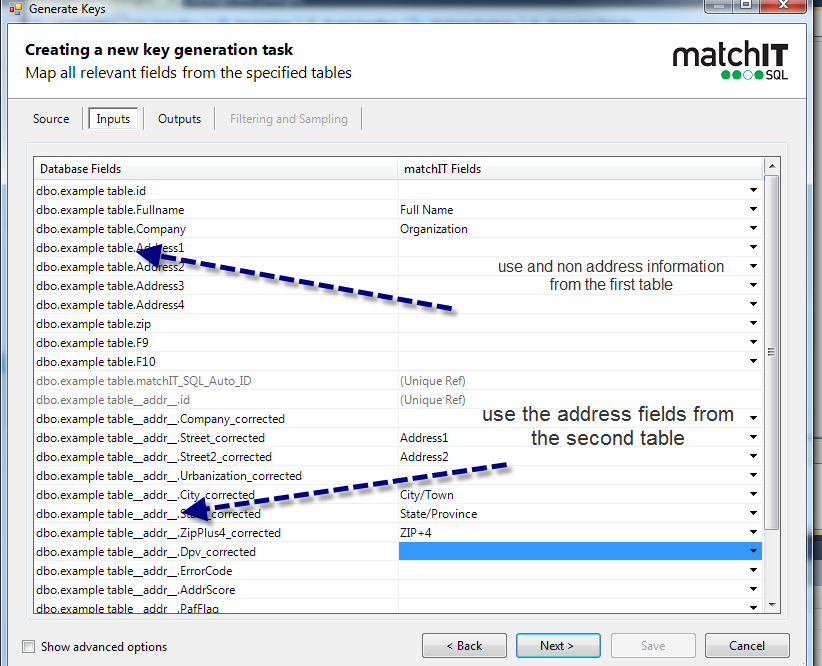

click next, and map the inputs, the most common table layout is fullname, company, two address lines, city, state, zipplus4, if your table isn’t like that, it’s OK, we can handle it however it comes in, but make sure to keep reading.

notice the fields on the left are from your source table, the fields on the right are the API mappings

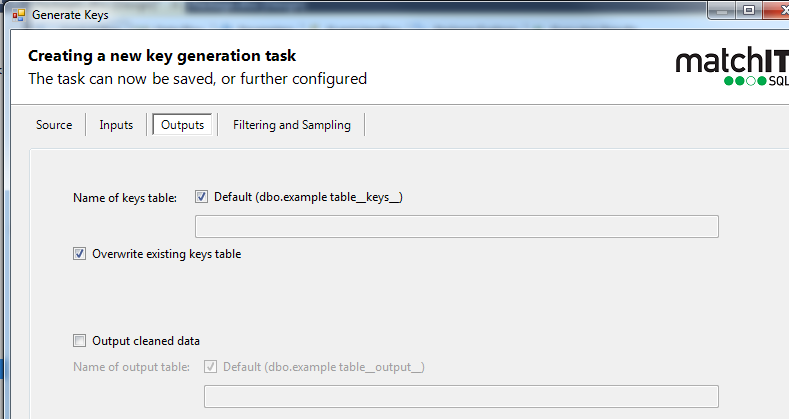

10. Then you would just click next, then save

Other common variations:

A.

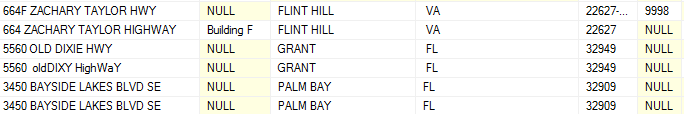

In below example – we can see that the city isn’t consistently in the same column, in cases like this we would suggest mapping them as address lines instead of specifying city/state to one column

Fullname = fullname

Company=company

Address1=adddress1

Address2=adddress2

Address3=adddress3

Address4=adddress4

Zip = zip+4 (even if your data contains a mix of 5 digit and 9 digit zips, you should still use Zip+4)

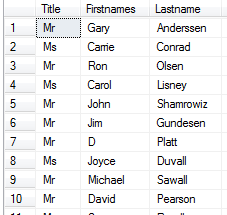

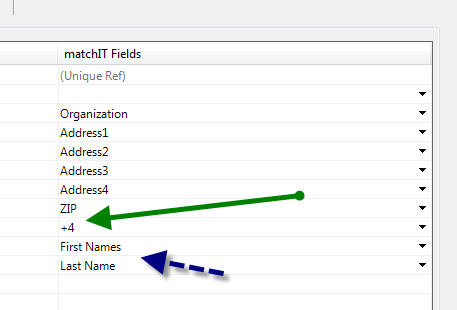

B. What if my name or zip is parsed

Instead of fullname, you would use first names, and lastname, you can also use initials or middlenames for middle name, prefix for the title (Mr or Mrs) or suffix

Instead of Zip+4, you would use zip, and +4

C. What if I ran address validation before, or have my addresses in a separate table

In that case, you would just connect the address validation task to key generation, then when you open up key generation, the connection would be read in from the previous task

You would set up your tables like below

On the inputs, you would use the address corrected table for any address inputs, you may need to scroll down to see the address table if your source has a lot of columns.

D. What If you have extra fields like a birth date, telephone, email, tax ID, invoice number that you may need to incorporate into matching or included in your output table?

There are already options for email, DOB, Telephone, any other fields would fall under a “custom Field”, you can map up to 9 custom fields. If you need them incorporated into the matching, then make sure to map them now. You can always come back to the task and change mappings later.